Chess engine uci endgame tablebase arena

The values change during the course of the game.

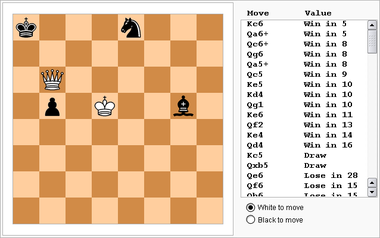

A higher value for the square means a general better position for that piece. The simplest form of positional knowledge are Piece Square Tables (PST), in which a value is assigned to every square on the board for every piece. It also needs some elementary positional knowledge to play reasonable chess. Playing with only that knowledge is (still?) not enough to let PeSTO play reasonably good chess, although it performs very good in tactical testsuites like WAC. The most elementary chess knowledge is the relative value of the chess pieces. PeSTO is an “experimental” chess engine in that it has only minimal chess knowledge in it’s evaluation and that it depends on the AB search as much as possible as opposed to the recent development of Neural Network engines which rely foremost on complex learned chess knowledge. Right now PeSTO is participating in the Qualification League of TCEC S17. I’m not yet where I want to be with the first “official” release of a NNUE rofChade, but I’m getting closer…. I hope there is a bug in the NNUE recalc software, otherwise I will have to start with different recalc strategies. I did a recalculation of the dataset with the first NNUE network, but after some 10 days of recalculation with 126 threads, the results of the training are disappointing. In the meantime I was able to get around 40 more elo out of the current dataset with the old evaluation, by finetuning the trainer parameters. This test version runs in Frank Quisinsky FCP Tourney-2022, he was kind enough to let the initial version run in the tournament! You can find the tournament here: Thank you for making the trainer available for everybody!Īfter some initial issues I was able to create a “standard” HALFKA network trained on the 1B positions and the result was already impressive. Eventually I decided to use Stockfishes pytorch trainer for the training part and started training. 
With these tools I created 1 Billion positions, and recalculated the positions with the old evaluation of rofChade with depth 10, but could’t find the energy to start working on a trainer.Īs happened before, the upcoming CSVN tournament (which in the end was canceled due to new covid regulations in the Netherlands) was the final push to really start with a trainer. I already started this summer with rewriting the NNUE support in rofChade, and developed some tools for generating positions, recalculating positions and recalculating the gameresult of game positions. Everybody else who enjoys (computer) chess!įinally I have found the energy to work on the first NNUE release of rofChade.The TCEC and CCC team to organize great tournaments.Graham banks and the rest of the CCRL team for doing a great testing job for so long.IpmanChess for testing some intermediate rofChade versions.Frank Quisinsky for letting intermediate rofChade versions play in it’s tournament.Jon Dart for the fathom library and updates on it.It helped me to discover some unexpected FRC bugs Andrew Grant for sharing his experience with his FRC implementation and perft test data.
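The piece-square idea described above for PeSTO, with values that change during the course of the game, can be sketched in a few lines. This is only an illustration and not PeSTO's actual code: the material values, the `centre_distance` helper, the tiny generated tables and the phase weights are all assumptions chosen to keep the example short. Each piece gets a middlegame and an endgame score, and the two are blended according to how much material is left on the board.

```python
# Illustrative (middlegame, endgame) material values in centipawns. Assumed, not tuned.
PIECE_VALUE = {"P": (82, 94), "N": (337, 281), "B": (365, 297),
               "R": (477, 512), "Q": (1025, 936), "K": (0, 0)}

# How much each piece contributes to the "game phase" (24 = full starting material).
PHASE_WEIGHT = {"P": 0, "N": 1, "B": 1, "R": 2, "Q": 4, "K": 0}
MAX_PHASE = 24


def centre_distance(sq):
    """0 for the four centre squares, up to 3 on the edge (squares: a1=0 .. h8=63)."""
    file, rank = sq % 8, sq // 8
    return max(abs(2 * file - 7), abs(2 * rank - 7)) // 2


def build_table(mg_per_step, eg_per_step):
    """Hypothetical PST: a (middlegame, endgame) bonus for each of the 64 squares."""
    return [(mg_per_step * centre_distance(sq), eg_per_step * centre_distance(sq))
            for sq in range(64)]


PST = {
    "N": build_table(-15, -10),  # knights dislike the edge in both phases
    "K": build_table(+10, -12),  # king hides on the edge early, centralises late
}
NO_BONUS = [(0, 0)] * 64


def evaluate(pieces):
    """Score from White's point of view; pieces are (letter, is_white, square) tuples."""
    mg = eg = phase = 0
    for piece, is_white, sq in pieces:
        table_sq = sq if is_white else sq ^ 56  # mirror the board for Black
        pst_mg, pst_eg = PST.get(piece, NO_BONUS)[table_sq]
        val_mg, val_eg = PIECE_VALUE[piece]
        sign = 1 if is_white else -1
        mg += sign * (val_mg + pst_mg)
        eg += sign * (val_eg + pst_eg)
        phase += PHASE_WEIGHT[piece]
    phase = min(phase, MAX_PHASE)  # promotions could push the sum above 24
    # Blend: all middlegame score at full material, all endgame score with none left.
    return (mg * phase + eg * (MAX_PHASE - phase)) // MAX_PHASE
```

With these made-up tables, a centralised king scores better than a cornered one when only the kings are left, and worse when all the heavy pieces are still on the board. The same square gets a different value as the game goes on.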

# Chess engine uci endgame tablebase arena code

I’ve been doing a lot of NN training for TCEC season 22, which eventually resulted in an unbelievable 4th place in the premier division! The infrafinal for 3rd place against Lc0 showed that there is still a major gap between the top 3 engines and the rest, including rofChade, but it is still an amazing result.

After the tournament I didn’t have much time to work on rofChade anymore, so it took some time to release the first NN version of rofChade, but here it is!

The current NN architecture is HALFKA (2×256)x32x32x1. For now, this still gives the best results for rofChade. The network is trained with 2.8B positions generated by rofChade. The positions have been rescored a few times with intermediate networks to simulate reinforcement learning. The NN code is implemented from scratch; the matrix calculations are heavily influenced by the Cfish implementation.
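To make the (2×256)x32x32x1 shape mentioned above more concrete, here is a rough sketch of a forward pass through a network of that shape. It is not rofChade's code. The feature indexing (`feature_index`, with 12 piece kinds per king square), the lazily generated random weights and the floating-point clipped ReLU are all assumptions for illustration; real NNUE implementations typically use quantized integer weights and update the accumulator incrementally.

```python
import random

HALF_DIM = 256               # accumulator width per perspective: the "256" in (2x256)
HIDDEN = 32                  # width of each of the two hidden layers
NUM_FEATURES = 64 * 12 * 64  # assumed: king square x piece kind x piece square


def feature_index(king_sq, piece, sq):
    """Input feature 'piece (0..11) on sq, seen with the own king on king_sq'."""
    return (king_sq * 12 + piece) * 64 + sq


def column(feature):
    """Hypothetical first-layer weights for one feature, generated from a seed."""
    rng = random.Random(feature)
    return [rng.uniform(-0.05, 0.05) for _ in range(HALF_DIM)]


def accumulator(features):
    """Sum the weight columns of all active features (the sparse first layer)."""
    acc = [0.0] * HALF_DIM
    for f in features:
        for i, w in enumerate(column(f)):
            acc[i] += w
    return acc


def clipped_relu(values):
    return [min(max(v, 0.0), 1.0) for v in values]


def dense(rows, bias, x):
    """Plain fully connected layer: one output per weight row."""
    return [sum(w * xi for w, xi in zip(row, x)) + b for row, b in zip(rows, bias)]


def random_layer(rng, n_out, n_in):
    rows = [[rng.uniform(-0.1, 0.1) for _ in range(n_in)] for _ in range(n_out)]
    return rows, [0.0] * n_out


_rng = random.Random(0)
LAYER1 = random_layer(_rng, HIDDEN, 2 * HALF_DIM)  # 512 -> 32
LAYER2 = random_layer(_rng, HIDDEN, HIDDEN)        # 32 -> 32
OUTPUT = random_layer(_rng, 1, HIDDEN)             # 32 -> 1


def forward(own_features, opp_features):
    """Concatenate both perspectives (2 x 256), then 32, 32 and a single output."""
    x = clipped_relu(accumulator(own_features) + accumulator(opp_features))
    x = clipped_relu(dense(*LAYER1, x))
    x = clipped_relu(dense(*LAYER2, x))
    return dense(*OUTPUT, x)[0]
```

The two feature lists describe the same position twice, once relative to the side to move's king and once relative to the opponent's king. That is where the factor 2 in front of the 256 comes from.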